Bank data is fragmented. Pipelines break.

Access structured bank transaction data via FCA-authorised Open Banking APIs.

Financial data ingestion is the process of collecting, transforming, and loading transaction data from source systems into the platforms and workflows that use it.

In accounting SaaS, ERP platforms, and Lawtech tools, the source system is almost always a bank. And bank data is not designed for reliable ingestion.

It arrives in inconsistent formats. It contains truncated merchant descriptions. It comes through scheduled connections that batch rather than stream. It varies across banks, across payment types, and across time.

The ingestion pipeline can be correctly built and still break – because the data source is fragmented by design.

At Finexer, I work with engineering and product teams building financial data pipelines on top of bank data. The pipeline failures they encounter almost never originate in the processing logic. They originate in the source.

TL;DR

The reliability of financial data ingestion depends on the quality of the bank data source, not just the pipeline architecture. Raw bank transaction data is inconsistent across institutions: description formats differ, merchant identification varies, and scheduled connections delay delivery. Finexer’s FCA-authorised Open Banking APIs provide structured, enriched bank transaction data with real-time webhook delivery, giving financial data ingestion pipelines a reliable, consistent source.

Key Takeaways

What is the financial data ingestion process?

Financial data ingestion is the process of extracting transaction data from source systems – typically banks – and delivering it into accounting, ERP, or analytics platforms.

In financial services, ingestion must handle real-time delivery, consistent schemas, and enriched transaction metadata to support downstream reconciliation and reporting workflows.

Why do bank data pipelines fail in financial platforms?

Bank data pipelines fail because source data is inconsistent. The same bank returns different transaction description formats across payment types. Merchant identification varies across institutions. Scheduled connections create timing gaps that leave transactions missing until the next polling cycle.

What makes bank transaction data difficult to ingest reliably?

Bank transaction data is generated for settlement purposes, not accounting readability. Descriptions are truncated. Merchant names vary by payment rail. Formats differ by institution.

Without enrichment at the source, ingestion pipelines must build normalisation logic that breaks whenever bank description formats change.

What does a reliable financial data ingestion source require?

A reliable source for financial data ingestion delivers transactions via webhook at the moment they occur. It applies merchant identifiers and category codes before delivery. The JSON schema stays consistent regardless of which bank or payment method originated the transaction.

What Is the Financial Data Ingestion Process in Financial Platforms?

How Does Bank Data Flow Into Accounting and ERP Systems?

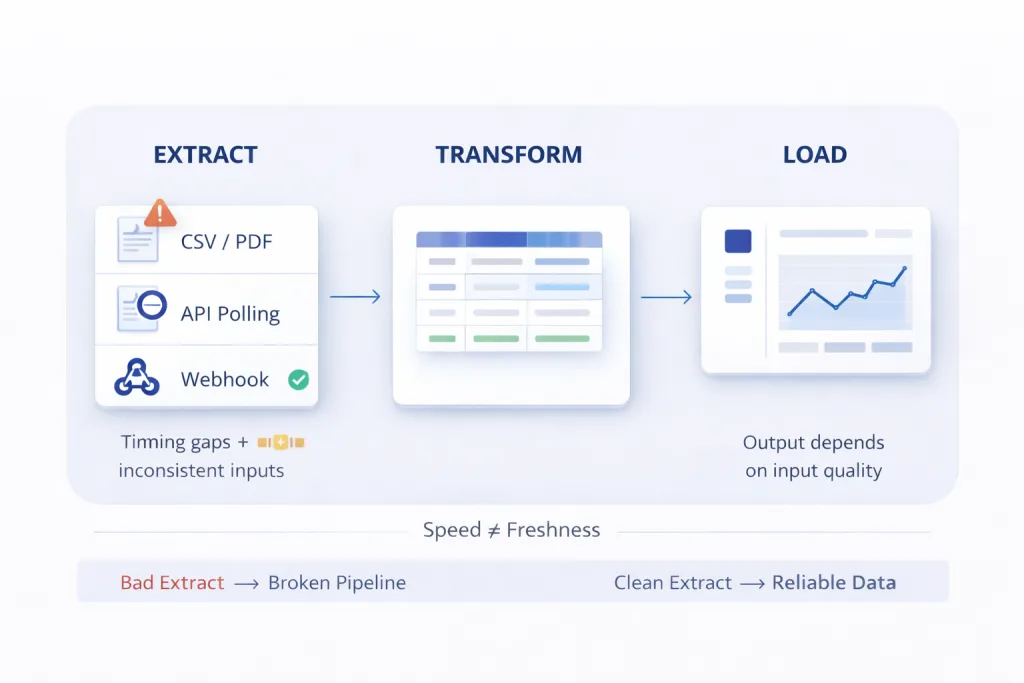

Financial data ingestion in accounting, ERP, and Lawtech platforms typically follows three stages: extraction from the bank source, transformation into a consistent schema, and loading into the platform’s data model.
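To make the three stages concrete, here is a minimal sketch in Python. The `Transaction` record, the field names, and the sample rows are all illustrative assumptions, not any platform's real data model, and the "bank source" is just a hard-coded list.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Transaction:
    """Hypothetical platform-side record; field names are illustrative."""
    booked_on: date
    amount: Decimal
    description: str


def extract() -> list[dict]:
    # Stage 1: stand-in for a bank source (CSV export, API poll, or webhook).
    return [
        {"date": "2025-03-01", "amount": "-42.50", "desc": "CARD 1234 ACME LTD "},
        {"date": "2025-03-02", "amount": "1200.00", "desc": "FPS CLIENT PAYMENT"},
    ]


def transform(raw: dict) -> Transaction:
    # Stage 2: map source fields onto one consistent schema.
    return Transaction(
        booked_on=date.fromisoformat(raw["date"]),
        amount=Decimal(raw["amount"]),
        description=raw["desc"].strip(),
    )


def load(rows: list[Transaction], ledger: list[Transaction]) -> None:
    # Stage 3: write into the platform's data model (an in-memory list here).
    ledger.extend(rows)


ledger: list[Transaction] = []
load([transform(r) for r in extract()], ledger)
```

Every assumption `transform` makes about the source (ISO dates, string amounts, a `desc` field) is a place where the pipeline can break if the source changes.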

The extraction stage is where most failures originate.

Bank data is extracted through one of three methods in most financial platform integrations:

- CSV exports or PDF bank statement uploads – manual, periodic, and dependent on user action

- Scheduled API polling – the system requests data from the bank at fixed intervals, typically hourly or daily

- Webhook-based delivery – each transaction triggers an event at the moment it occurs at the bank

The first two methods create timing gaps. Transactions between exports or poll cycles are invisible to the ingestion pipeline until the next extraction runs.
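The size of a polling gap is simple arithmetic. The sketch below assumes a fixed poll schedule starting at midnight; the function name `next_poll_after` and the example times are hypothetical.

```python
from datetime import datetime, timedelta


def next_poll_after(cleared_at: datetime, first_poll: datetime, interval: timedelta) -> datetime:
    """Return the first poll time at or after the moment a transaction cleared."""
    if cleared_at <= first_poll:
        return first_poll
    elapsed = cleared_at - first_poll
    # Ceiling division: whole intervals needed to reach or pass cleared_at.
    cycles = -(-elapsed // interval)
    return first_poll + cycles * interval


midnight_poll = datetime(2025, 3, 3, 0, 0)

# Daily polling: a transaction clearing at 09:00 waits for the next midnight run.
cleared_daily = datetime(2025, 3, 3, 9, 0)
daily_delay = next_poll_after(cleared_daily, midnight_poll, timedelta(days=1)) - cleared_daily

# Hourly polling: a transaction clearing at 09:05 waits for the 10:00 run.
cleared_hourly = datetime(2025, 3, 3, 9, 5)
hourly_delay = next_poll_after(cleared_hourly, midnight_poll, timedelta(hours=1)) - cleared_hourly
```

Under these assumptions the daily schedule leaves the transaction invisible for 15 hours and the hourly schedule for 55 minutes. Shorter intervals shrink the gap but never remove it.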

The data that arrives also varies in format. Descriptions, amounts, merchant fields, and date representations all differ across UK banks.

Bank integration API for financial data access covers how bank API integration architecture determines whether the extraction layer delivers consistent or fragmented data to financial ingestion pipelines.

“The ingestion pipelines I see breaking in production are almost never badly built. The schema handling is correct. The transformation logic is sound. What breaks them is the source data arriving differently than the pipeline expects – and bank data almost always does.” – Yuri, Finexer

Why Does the Financial Data Ingestion Process Break?

What Causes Bank Data Pipeline Failures in Production?

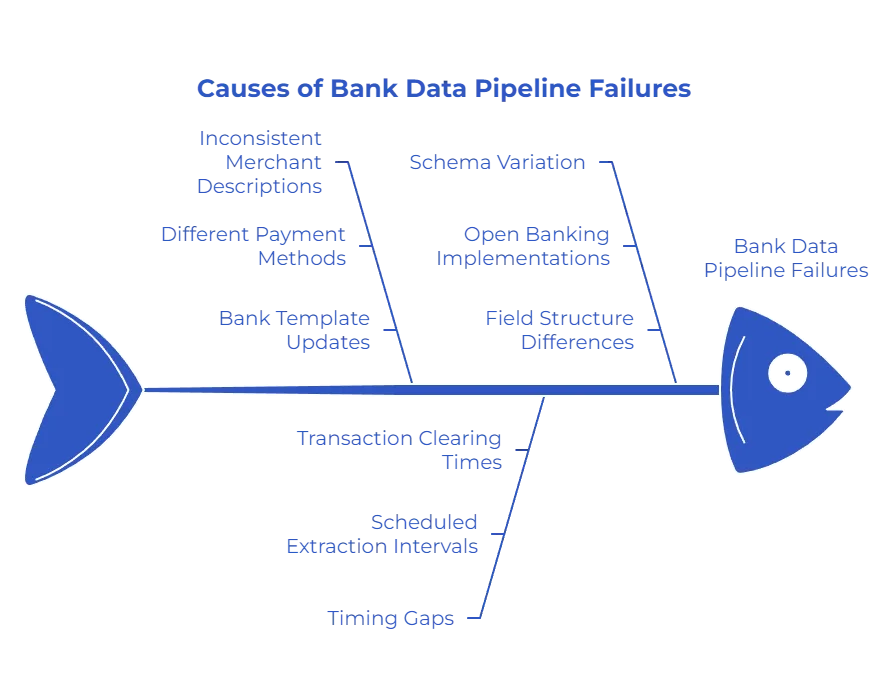

Three structural characteristics of bank data create ingestion failures that are predictable and consistent across platforms.

Inconsistent merchant descriptions – a single supplier generates different bank description strings across payment methods. BACS, Faster Payments, card, and direct debit each produce a different reference format for the same payee.

Ingestion pipelines built to match a specific description format fail when the payment method changes – or when the bank updates its description template.
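A small sketch shows how this plays out. The description strings below are invented for illustration and are not real bank output; the `merchant_id` field and its value are likewise hypothetical.

```python
import re

# Made-up description strings for one supplier across payment rails.
descriptions = {
    "card": "CARD 4821 ACME SUPPLIES LON",
    "faster_payments": "FPS ACME SUPP LTD REF 00917",
    "direct_debit": "DD ACMESUPPLIES 77120034",
    "bacs": "BACS ACME S. LTD INV4471",
}

# A matching rule written against the card format only.
card_rule = re.compile(r"^CARD \d{4} ACME SUPPLIES")

matched = {rail: bool(card_rule.search(text)) for rail, text in descriptions.items()}

# With a merchant identifier attached at source (hypothetical field name),
# matching no longer depends on the description text at all.
enriched = [{"description": text, "merchant_id": "mrc_acme"} for text in descriptions.values()]
matched_by_id = [tx["merchant_id"] == "mrc_acme" for tx in enriched]
```

The rule matches the card transaction and misses the other three. Loosening the pattern helps until the next format change; matching on an identifier sidesteps the description text entirely.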

Timing gaps from scheduled extraction – financial data ingestion pipelines that poll for bank data at intervals accumulate timing gaps. A transaction that cleared at 9am may not appear in the pipeline until midnight.

Cash flow reports, reconciliation triggers, and compliance checks built on this data operate on stale information.

Schema variation across banks – UK banks implement the Open Banking standard with their own field variations. Amount representations, date formats, and reference field structures differ across institutions.

A pipeline normalised for one bank’s output requires additional handling when a second bank is added.
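As a rough illustration of that extra handling, the sketch below normalises two payload shapes into one record. Both shapes are invented stand-ins for per-bank variation, not the actual output of any UK bank.

```python
from datetime import date, datetime
from decimal import Decimal

# Two made-up payload shapes for the same underlying transaction.
bank_a = {"amount": "-42.50", "bookingDate": "2025-03-01", "reference": "ACME LTD"}
bank_b = {"amount_minor": 4250, "direction": "debit", "date": "01/03/2025", "ref": {"text": "ACME LTD"}}


def normalise_bank_a(tx: dict) -> dict:
    # Signed decimal string, ISO date, flat reference field.
    return {
        "amount": Decimal(tx["amount"]),
        "booked_on": date.fromisoformat(tx["bookingDate"]),
        "reference": tx["reference"],
    }


def normalise_bank_b(tx: dict) -> dict:
    # Unsigned minor units plus a direction flag, UK-style date, nested reference.
    sign = -1 if tx["direction"] == "debit" else 1
    return {
        "amount": sign * Decimal(tx["amount_minor"]) / 100,
        "booked_on": datetime.strptime(tx["date"], "%d/%m/%Y").date(),
        "reference": tx["ref"]["text"],
    }


# One normaliser per bank: this mapping grows with every institution added.
NORMALISERS = {"bank_a": normalise_bank_a, "bank_b": normalise_bank_b}

rows = [NORMALISERS["bank_a"](bank_a), NORMALISERS["bank_b"](bank_b)]
```

Both rows come out identical, but only because someone wrote and now maintains a function per bank.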

According to Open Banking Limited’s 2025 Impact Report, over 13 million UK users now access financial services through Open Banking infrastructure. For platforms ingesting bank data across this user base, schema consistency is not a preference – it is a production requirement.

Financial data extraction API for financial services platforms covers how extraction method selection determines whether bank data arrives consistently enough for reliable downstream ingestion.

| Ingestion Failure | Root Cause | Pipeline Impact | Source Fix |
|---|---|---|---|
| Missing transactions | Scheduled polling misses transactions between cycles | Gaps in financial record, reconciliation failures | Webhook delivery triggers at transaction occurrence |
| Merchant matching failure | Same supplier appears with different description strings | Categorisation rules fail, miscategorised expenses | Merchant ID normalises all variants before ingestion |
| Schema mismatch | Different banks return different field formats | Pipeline breaks when second bank is added | Consistent JSON schema across all banks at source |
| Stale data | Batch delivery delays by hours or overnight | Reports and decisions built on outdated positions | Real-time delivery eliminates batch delay entirely |

What Does Reliable Financial Data Ingestion Actually Require?

Why Does Improving the Pipeline Not Fix a Broken Source?

The instinct when financial data ingestion fails is to improve the pipeline. Better transformation logic. More robust merchant matching. Additional normalisation rules.

This does not fix the problem.

The pipeline processes what it receives. If the source delivers inconsistent descriptions, matching logic fails – regardless of sophistication.

If the source delivers data in batches, the pipeline produces stale outputs – regardless of processing speed. If the source schema varies by bank, the normalisation layer expands – regardless of how carefully it was built.

Reliable financial data ingestion requires fixing the source, not the pipeline.

Smart data infrastructure for financial platforms covers how source data quality determines downstream financial workflow reliability across accounting and ERP platforms.

How Does Finexer Enable Reliable Financial Data Ingestion?

What Does Finexer’s Bank Data Access Provide for Ingestion Pipelines?

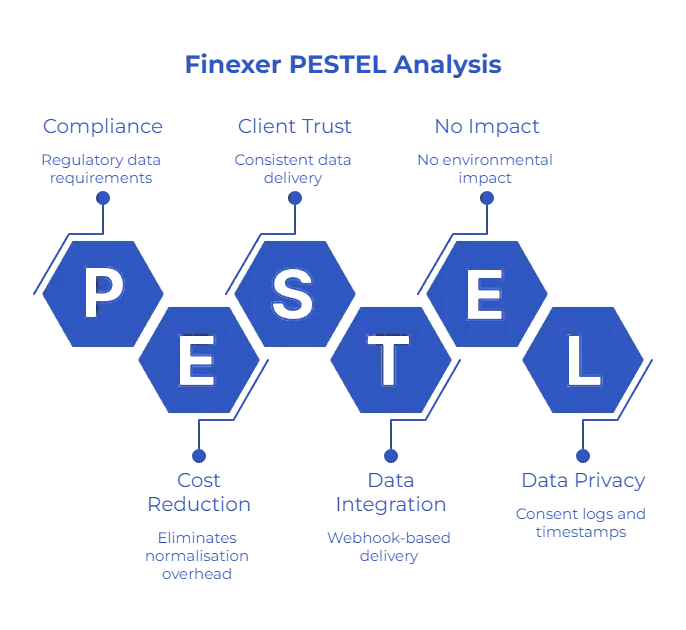

The problem: financial data ingestion pipelines break because bank transaction data arrives inconsistently. Finexer’s FCA-authorised Open Banking APIs provide access to structured, enriched bank transaction data – giving ingestion pipelines a reliable, consistent source to retrieve from.

- Real-time webhook delivery – each transaction delivered at the moment it occurs, no polling gaps

- Merchant IDs per transaction – consistent merchant identification regardless of payment method or bank

- Category codes per transaction – expense classification applied at source before ingestion

- Structured JSON – consistent schema across almost all major UK banks

- Up to 7 years of transaction history – complete historical data for backfill and period ingestion

- Consent logs and access timestamps per retrieval – audit trail for compliance-sensitive pipelines

- Multi-account access in one consent flow
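On the receiving side, a pipeline consuming enriched webhook events can be quite thin. The sketch below is an assumption-heavy illustration: this article does not specify Finexer's payload, so the field names (`transaction_id`, `amount`, `merchant_id`, `category_code`), the sample values, and the `handle_webhook` function are all hypothetical.

```python
import json
from decimal import Decimal

# Hypothetical field names: the real payload is not specified in this article.
REQUIRED_FIELDS = ("transaction_id", "amount", "merchant_id", "category_code")


def handle_webhook(body: bytes) -> dict:
    """Parse one transaction event and reject it if expected fields are missing."""
    event = json.loads(body)
    missing = [f for f in REQUIRED_FIELDS if f not in event]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    return {
        "id": event["transaction_id"],
        "amount": Decimal(str(event["amount"])),
        "merchant_id": event["merchant_id"],
        "category_code": event["category_code"],
    }


# Illustrative event body, as it might arrive in an HTTP POST.
sample = json.dumps({
    "transaction_id": "tx_001",
    "amount": "-42.50",
    "merchant_id": "mrc_acme",
    "category_code": "office_supplies",
}).encode()

record = handle_webhook(sample)
```

The handler validates and maps fields but contains no per-bank branches and no description parsing, which is the point of enrichment at source.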

Open Banking financial data platform integration covers how Open Banking API access integrates into financial data platform architectures as the structured source layer for downstream ingestion.

“Finexer delivers structured bank transaction data to the ingestion layer – with merchant IDs, category codes, and a consistent schema already applied. The pipeline retrieves data it can immediately process, rather than data it needs to normalise first.” – Yuri, Finexer

My Take

Every financial data ingestion conversation starts with the pipeline. Engineers describe the transformation logic, the schema handling, the retry architecture.

The conversation changes when we look at what is arriving at the top of the pipeline.

Inconsistent. Delayed. Formatted differently from yesterday. The problem was never the pipeline.

Common Use Cases

Accounting SaaS Platforms

Accounting platforms ingesting client bank data through CSV exports or scheduled feeds accumulate timing gaps and description inconsistencies. These break categorisation workflows across every client account.

Finexer’s webhook-based bank data delivery provides a consistent, enriched source that ingestion pipelines can process without source-level normalisation logic.

ERP Platforms

ERP financial modules ingesting multi-bank transaction data face schema variation across institutions. Each new bank added multiplies the per-bank normalisation overhead.

Finexer’s consistent JSON output across almost all major UK banks removes that per-bank normalisation work for the banks it covers.

Lawtech Platforms

Lawtech platforms ingesting client financial data for source of funds analysis need complete, auditable transaction records.

Finexer’s AIS delivers up to 7 years of transaction history with consent logs and access timestamps – providing both the data depth and the audit trail that compliance-sensitive pipelines require.

What is financial data ingestion?

Financial data ingestion is the process of extracting transaction and account data from source systems – typically banks – and loading it into accounting, ERP, or analytics platforms. Reliable ingestion requires consistent source data, real-time delivery, and structured transaction metadata that downstream workflows can process without manual normalisation.

Why does the financial data ingestion process break in accounting platforms?

Financial data ingestion breaks because bank transaction data is inconsistent at the source. Merchant descriptions vary across payment methods. Scheduled polling creates timing gaps. Schema formats differ across banks. The pipeline cannot correct source-level inconsistency – it can only process what it receives.

How does Open Banking API access improve financial data ingestion?

Finexer’s FCA-authorised Open Banking APIs deliver bank transaction data with merchant identifiers, category codes, and a consistent JSON schema, per transaction, as each transaction occurs. Ingestion pipelines receive structured source data rather than raw bank descriptions. This removes the source-level normalisation overhead that causes downstream failures.

Build financial data ingestion pipelines on structured, enriched bank transaction data.